SeeMe.ai Deployment.

This is a quick guide to deploy your trained models in just a few steps using SeeMe.ai, which allows you to easily deploy, use and share your models.

Before you begin

If you prefer to have these steps in a Jupyter Notebook, have a look at our Fast.ai Quick guides on Github.

Setup

Install the SDK

All you need to deploy your model is the Python SDK:

pip install --upgrade seeme

or in your Jupyter Notebook:

!pip install --upgrade seeme

Create a client

from seeme import Client

client = Client()

Register an account

If you have not done so already, create an account

my_password = # example: "supersecurepassword"

my_username = # example: "janvdp"

my_email = # example: "[email protected]"

my_firstname = # example: "Jan"

my_name = # example: "Van de Poel"

client.register(

username=my_username,

email=my_email,

password=my_password,

firstname=my_firstname,

name=my_name

)

Log in

client.login(my_username, my_password)

Deploy your model

Export your model for deployment

# Put your model in eval model

learn.model.eval();

# Export your model (by default your model will be exported to `export.pkl`)

learn.export()

## Alternatively, if you want to give the exported file a name

# my_custom_filename = "a_filename_to_remember.pkl"

# learn.export(my_custom_filename)

Create a model on SeeMe.ai

SeeMe.ai supports different types of AI applications with different frameworks and framework versions.

All you need to do is get the application_id like this:

import torch

import fastai

# Get the application_id for your framework (version).

application_id = client.get_application_id(

base_framework="pytorch",

framework="fastai",

base_framework_version=str(torch.__version__), # or pass the version like a string: "1.6.0"

framework_version=str(fastai.__version__), # or pass the version like a string: "0.0.26"

application="image_classification"

)

Next, you create your model on SeeMe.ai

model_name = "My Model name"

description = "Created to be used..."

classes = "Cats and dogs"

my_model = client.create_full_model({

"name": model_name,

"description": description,

"classes": classes,

"application_id": application_id

})

Quick note: to see all the supported applications, platforms and versions:

from pprint import pprint

pprint(client.applications)

Upload your model

client.upload_model(my_model["id"], "folder/to/model")

# Or, if you exported the model with a custom filename

client.upload_model(

my_model["id"],

folder="folder/to/model/",

filename=my_custom_filename

)

Add a logo (Optional)

client.upload_logo(

my_model["id"],

folder="folder/to/image",

filename="image_name.png") # or ".jpg"

Use your model

Once your model is deployed, you can use it in a number of ways:

Python SDK

You can now use the Python SDK to make predictions from basically anywhere, provided you have:

- SeeMe SDK installed

- Login credentials

- The ‘id’ of a deployed model

- An image to classiy

image_location = "data/images/image_to_predict.png"

result = client.inference(my_model["id"], image_location)

Print the results

print(result["prediction"])

print(result["confidence"])

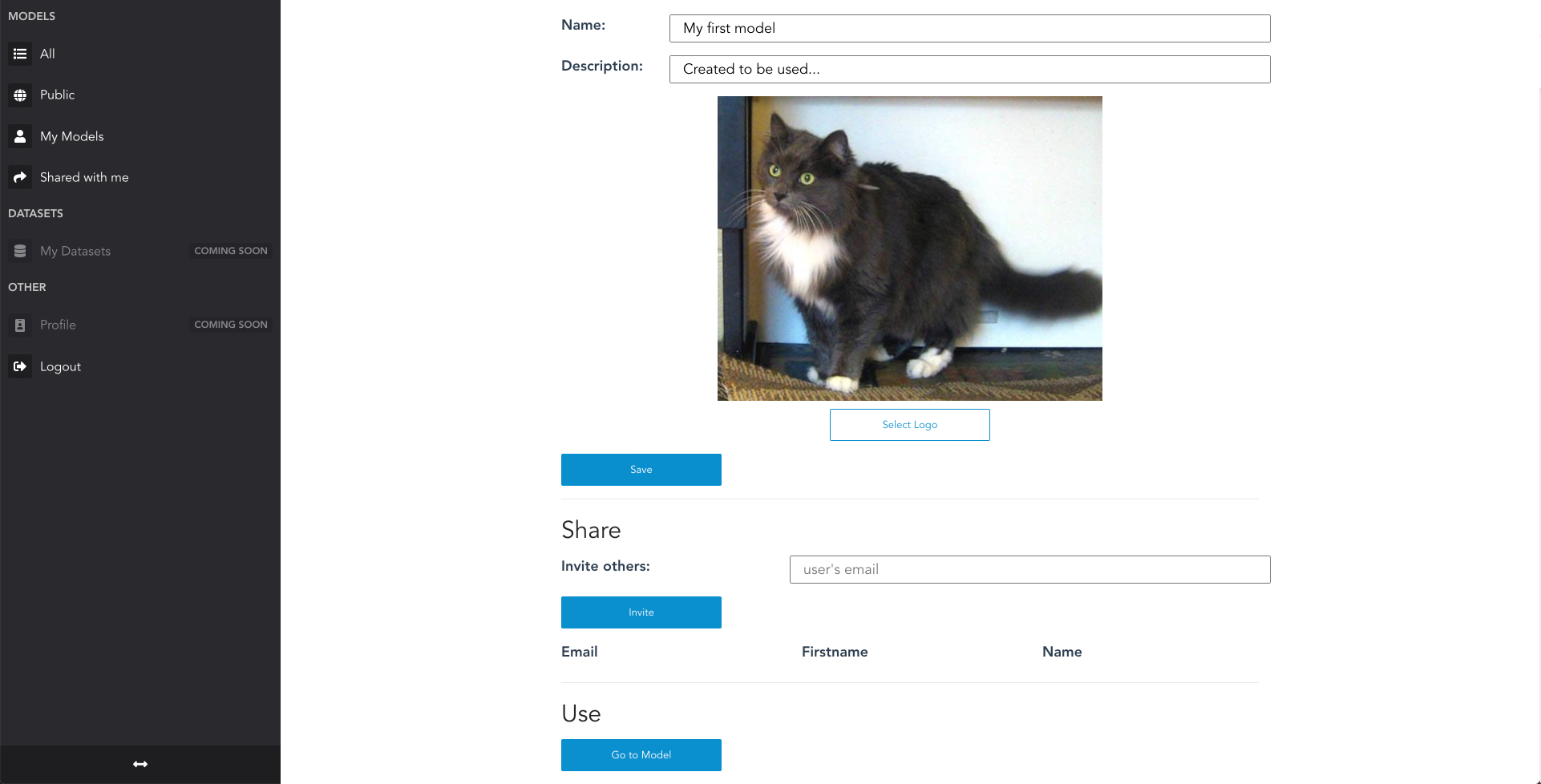

On the web

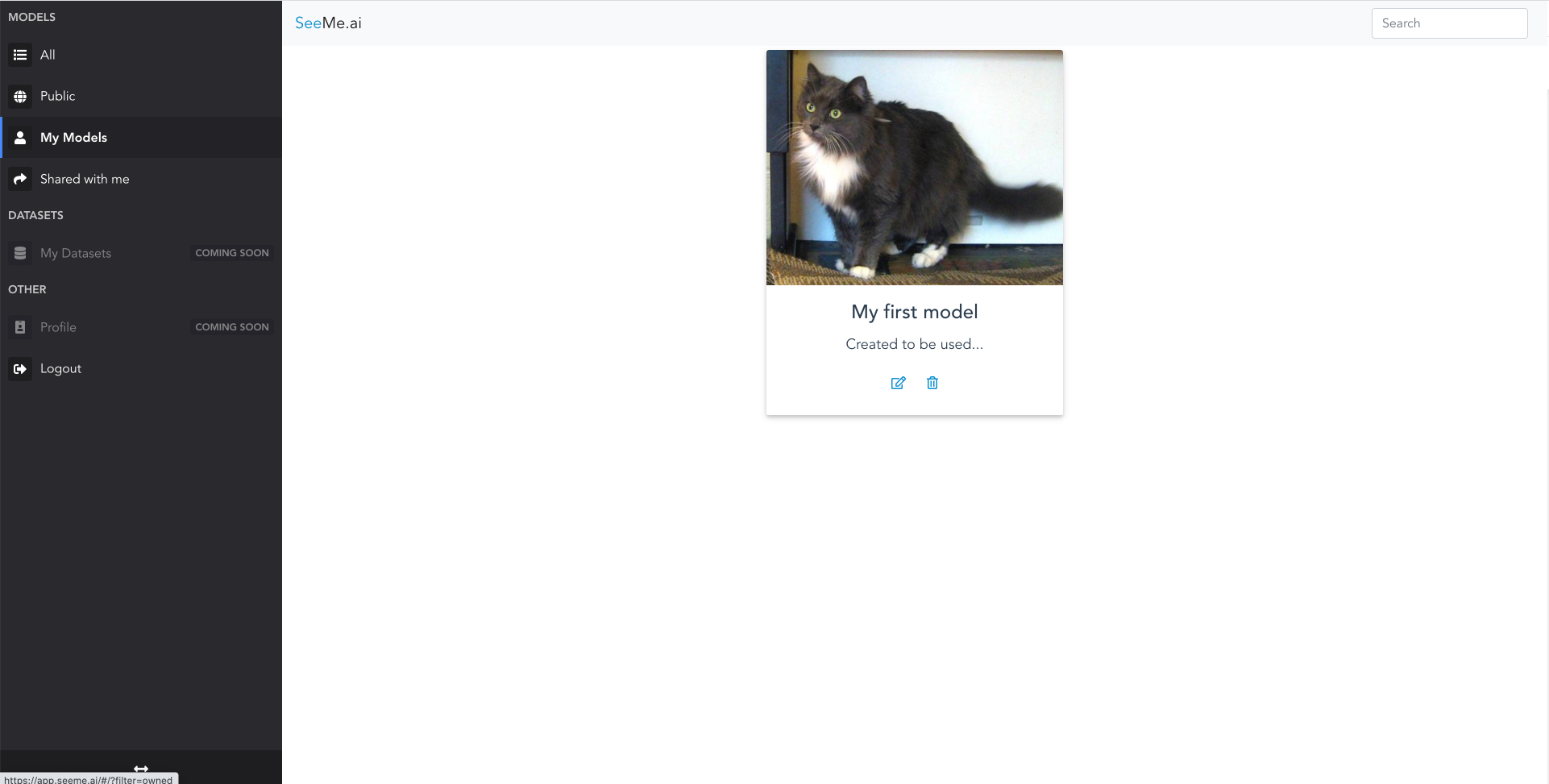

You can also open the web app via app.seeme.ai and log in with your credentials.

You will see an overview of all your models as well as the public models that can be used by anyone.

Click on the model to start making predictions.

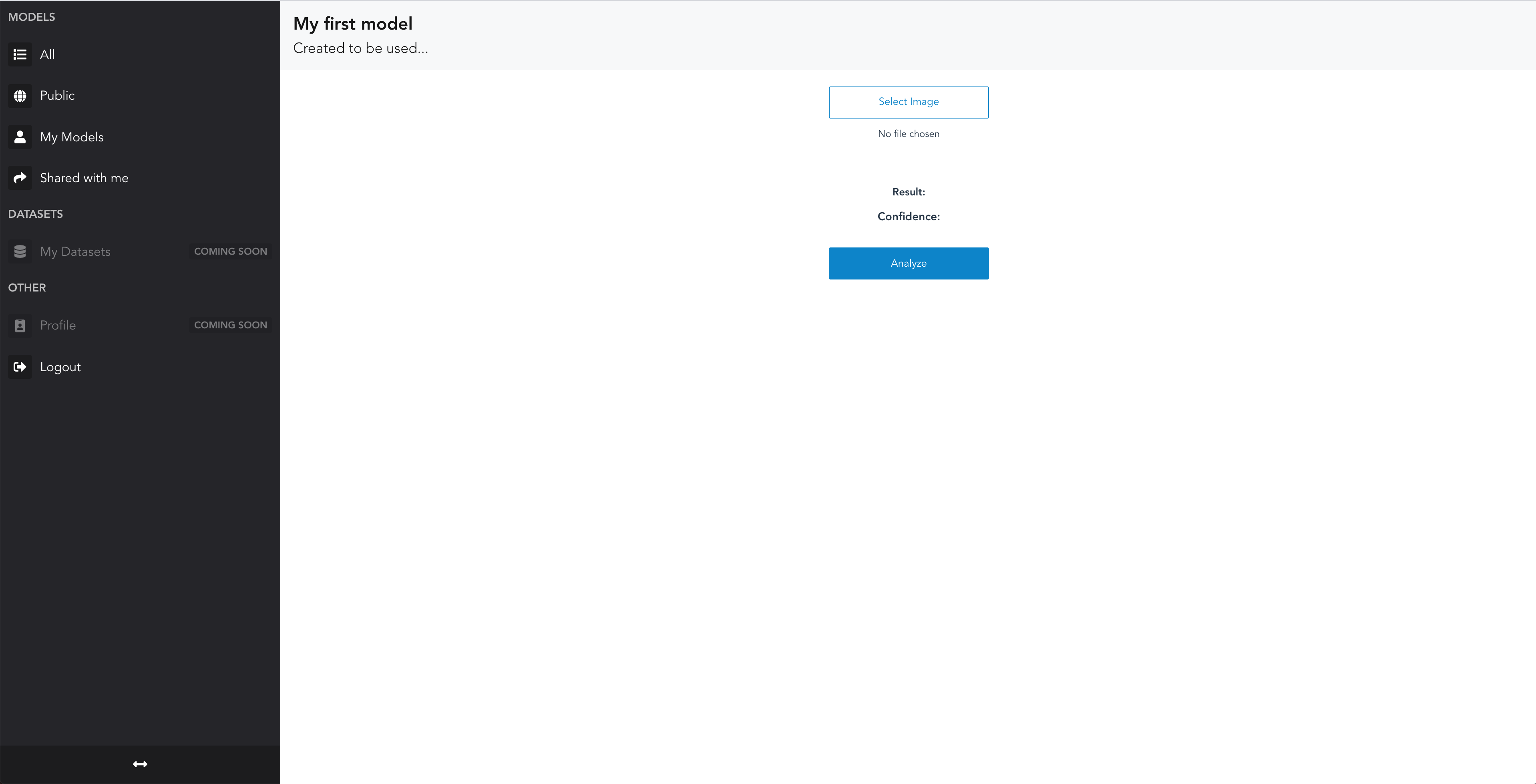

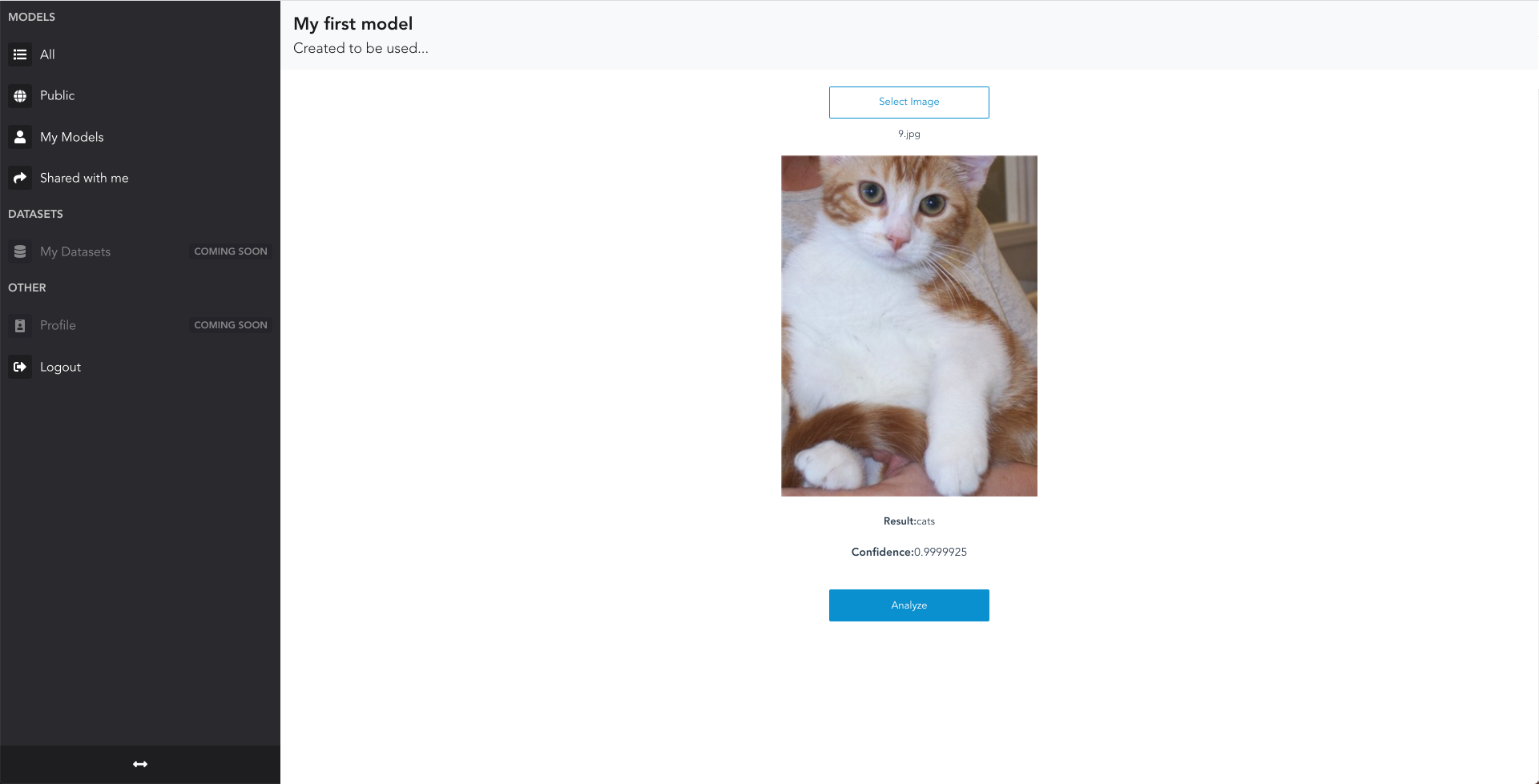

Here is what the detail screen looks like:

Next:

- click on

select image - find an image you would like to classify

- click on analyze

- Look at

resultandconfidenceto see what the prediction is.

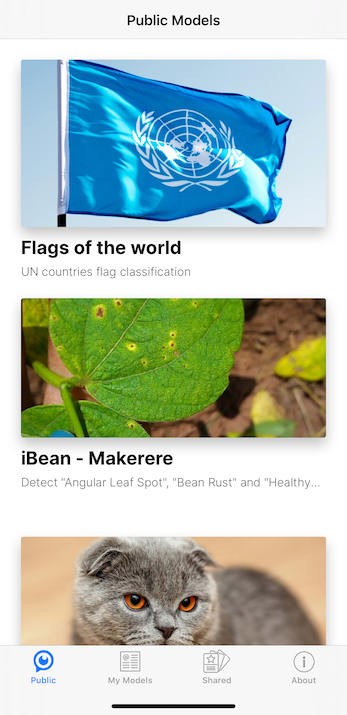

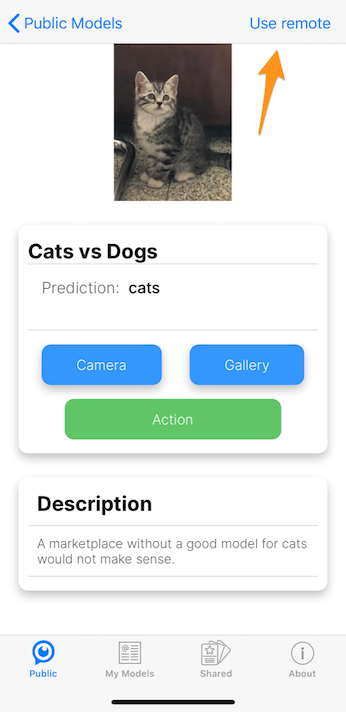

iOS/Android

You can also access all models - yours, shared, and public - on our native mobile apps. You can install them from the iOS App Store and Android Play Store.

Once you have logged in, you see the overview of models:

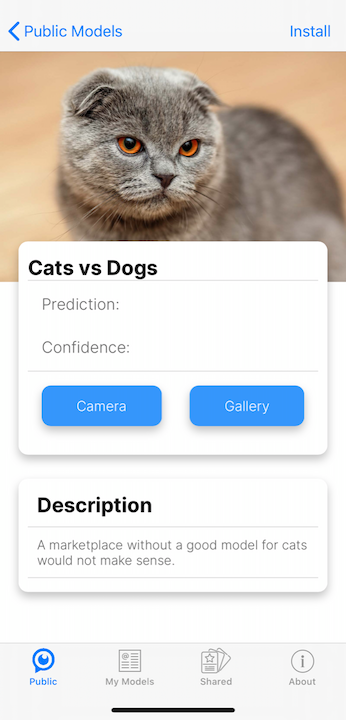

On the model detail, you can take pictures with the camera or select from the gallery:

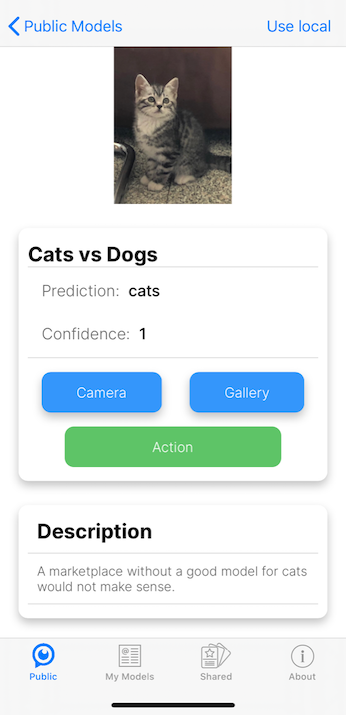

And see what your model thinks:

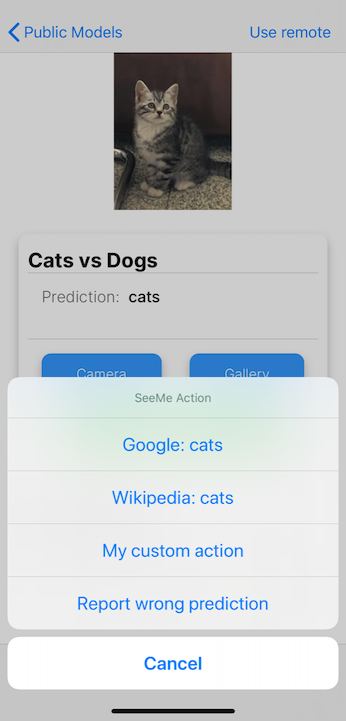

Once your model has made a prediction, you will see a green button name “Action”. When clicking that button, you have a number of choices:

- search Google for your prediction

- search Wikipedia for your prediction

- Report a wrong prediction

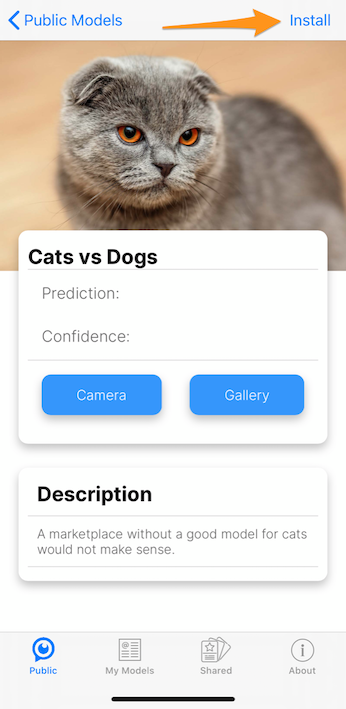

When you upload your trained model, SeeMe.ai automatically converts it to ONNX and Core ML, which enables you to install and use your AI Model on your device, even when you are offline. This feature is currently in preview.

When the model is available to be installed, you will see the install button on the top right:

Once installed, you can still switch between using the offline or online version of your model:

The screenshots above are all taken on iOS, however, on Android all these features work similarly, with one exception: Android offline models are still in development.

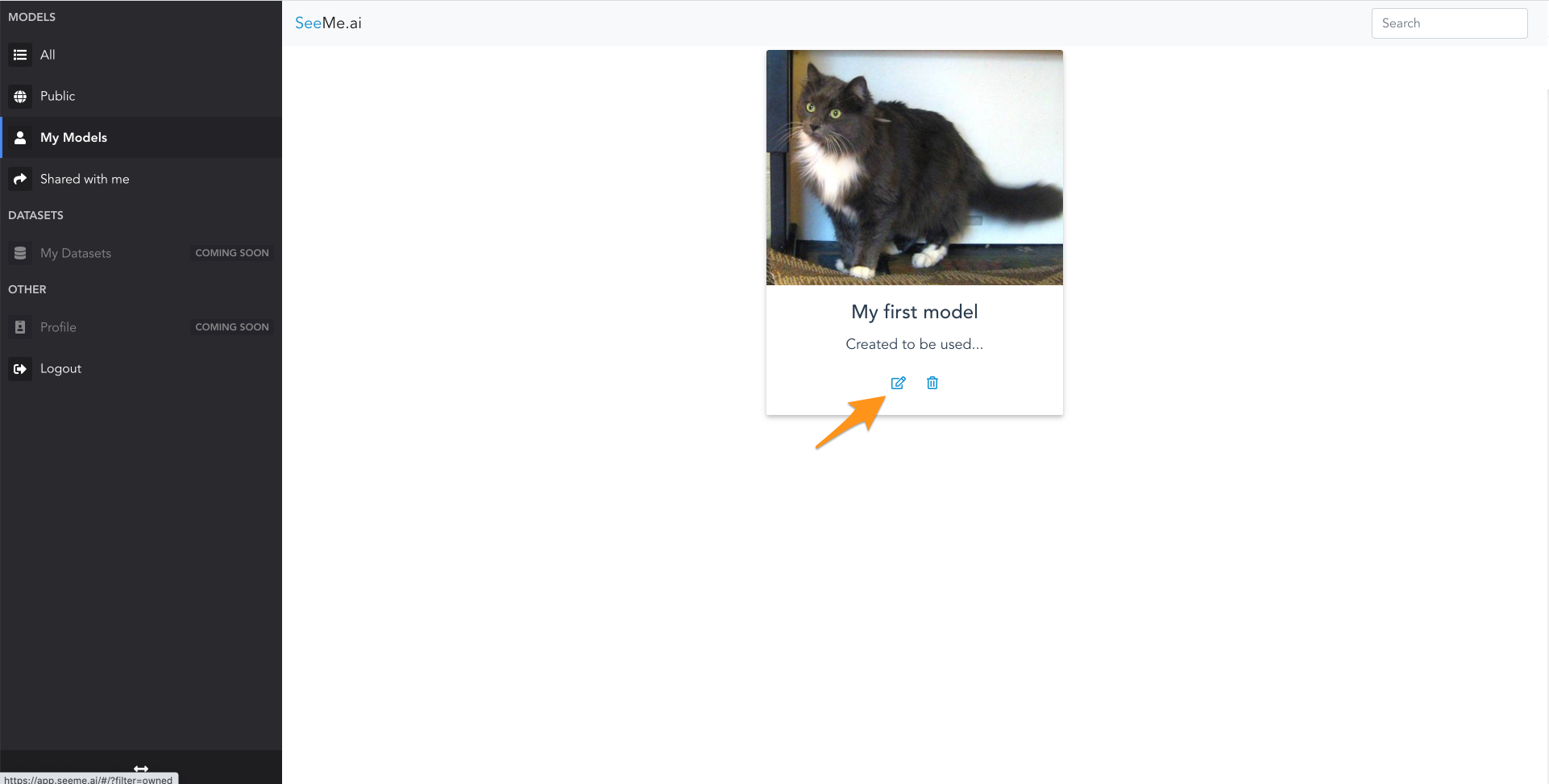

Share your model

Once you have tested your model, it is time to share it with friends.

Go back to the home page, and click the edit icon.

You will go to the model detail screen:

There you can invite people by entering their email address.

Once invited, they will receive an email to either register (if that email is not yet associated to an account) or to notify them of your model being shared with them.

Pricing

For pricing details, check the pricing page.

Support / Feedback

For feedback, questions or problems, just send an email to [email protected].